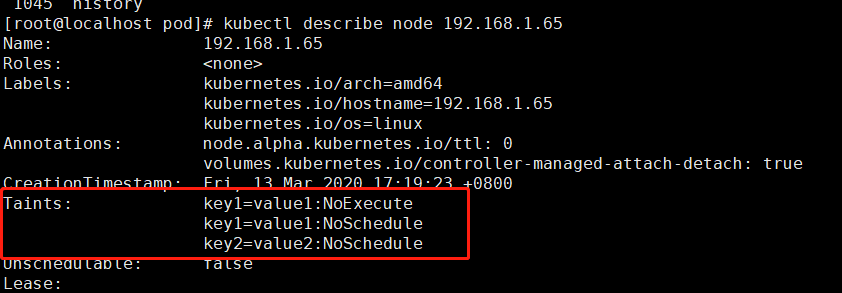

Taints, tolerations and nodeSelector in AKS (Azure Kubernetes Service), explained in plain English.

Taints and tolerations are useful if you want to work with dedicated nodes. With dedicated nodes, you can create a node pool with very specific parameters (for example, high CPU) and use these nodes only for specific applications. You can also separate your application pods from those of your secondary applications.

To add a taint to an existing node, run `kubectl taint nodes node-name key=value:effect`. For example: `kubectl taint nodes node-main taint=test:PreferNoSchedule`. To show the taints of a node, run `kubectl describe node node-name` and look at the `Taints` field.

Pay attention to the rules defined by the effect of the applied taints. With `NoSchedule`, a pod that doesn't tolerate the taint can't be scheduled on the node. With `PreferNoSchedule`, the scheduler tries to avoid placing such a pod on the node, but this is not guaranteed. With `NoExecute` (for example `taint=test:NoExecute`), all pods whose tolerations don't match the taint are excluded from the node, including pods that are already running there, which are evicted.

As the schema shows, when there is no taint, a pod can be assigned to any node (here Pod1 is placed on Node1, but it could also be on Node2). Once Node1 is tainted, the new pod, which has no toleration, is scheduled on Node2. Pod2, however, has a toleration that matches the taint, so it can be scheduled on Node1.

Now that your nodes are tainted, you can add tolerations to your pods. A toleration matches a taint through its key, operator, value and effect. The default value for the operator is `Equal`, which requires the value to match. If you change the operator to `Exists`, you don't have to give a value. If the `effect` field is left empty in the toleration, it matches every effect for that key.
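The matching rules for taints and tolerations can be sketched in Python. This is a minimal illustration, not the Kubernetes scheduler code: the `Taint`/`Toleration` dataclasses and the helpers `tolerates`, `can_schedule` and `eligible` are hypothetical stand-ins, and the scenario assumes Node1 from the schema carries `taint=test:NoSchedule` (the schema does not state which effect is used).

```python
# Minimal sketch of taint/toleration matching. Assumption: plain dataclasses
# stand in for the real Kubernetes Taint/Toleration API objects.
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Taint:
    key: str
    value: str
    effect: str  # "NoSchedule", "PreferNoSchedule" or "NoExecute"


@dataclass
class Toleration:
    key: str = ""
    operator: str = "Equal"  # the default operator is Equal
    value: str = ""
    effect: str = ""  # an empty effect matches every effect


def tolerates(tol: Toleration, taint: Taint) -> bool:
    """Return True if a single toleration matches a single taint."""
    if tol.effect and tol.effect != taint.effect:
        return False
    # An empty key skips the key check (Kubernetes only allows this with Exists).
    if tol.key and tol.key != taint.key:
        return False
    if tol.operator in ("", "Equal"):
        return tol.value == taint.value
    if tol.operator == "Exists":
        return True  # the value is not compared
    return False


def can_schedule(tolerations: List[Toleration], taints: List[Taint]) -> bool:
    """Hard filter: every NoSchedule/NoExecute taint must be tolerated.

    PreferNoSchedule is a soft rule, so this simplified filter skips it.
    """
    for taint in taints:
        if taint.effect == "PreferNoSchedule":
            continue
        if not any(tolerates(t, taint) for t in tolerations):
            return False
    return True


# Hypothetical version of the schema: Node1 is tainted, Node2 is not.
nodes: Dict[str, List[Taint]] = {
    "Node1": [Taint("taint", "test", "NoSchedule")],
    "Node2": [],
}


def eligible(tolerations: List[Toleration]) -> List[str]:
    """Names of the nodes a pod with these tolerations may be scheduled on."""
    return [name for name, taints in nodes.items() if can_schedule(tolerations, taints)]


new_pod: List[Toleration] = []  # no tolerations
pod2 = [Toleration(key="taint", operator="Equal", value="test", effect="NoSchedule")]

print(eligible(new_pod))  # ['Node2']
print(eligible(pod2))     # ['Node1', 'Node2']
```

Note that a toleration only *allows* Pod2 on Node1; it does not force it there. In the real scheduler `PreferNoSchedule` lowers a node's score rather than being ignored, so skipping it here is a simplification.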

First, let's check what taints and tolerations exactly are in Kubernetes. Taints are a property of nodes that push pods away if the pods don't tolerate the taint.

Each node pool can have a set of key/value labels defined. Labels can be attached to node pools in a cluster during creation and can subsequently be added and modified at any time. These labels do not directly imply anything to the semantics of the core system, but are intended to be used to drive use cases where pod affinity to specific nodes is desired. The key must be unique across all node pools for a given cluster. Palette enables users to label the nodes of a master and worker pool by using key/value pairs.

WHAT IS KUBERNETES TAINT

You can constrain a Pod to only run on a particular set of Node(s). There are several ways to do this, and the recommended approaches, such as nodeSelector and node affinity, all use label selectors to facilitate the selection. Generally such constraints are unnecessary, as the scheduler will automatically do a reasonable placement (e.g. spread your pods across nodes so as not to place a pod on a node with insufficient free resources), but there are some circumstances where you may want to control which node the pod deploys to: for example, to ensure that a pod ends up on a machine with an SSD attached to it, or to co-locate pods from two different services that communicate a lot in the same availability zone.
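The label-selector idea behind `nodeSelector` can be sketched in a few lines of Python. This assumes node labels are plain dicts; the node names and label values are hypothetical, although `disktype` mirrors the SSD example and `topology.kubernetes.io/zone` is the well-known zone label. The sketch only models the filtering step; among the matching nodes, the scheduler still chooses the final placement.

```python
# Minimal sketch of nodeSelector semantics: a pod may only run on nodes whose
# labels contain every key/value pair of its selector (exact match).
# Assumption: labels are plain dicts; node names and values are hypothetical.
from typing import Dict, List


def matches_node_selector(selector: Dict[str, str], labels: Dict[str, str]) -> bool:
    """True if every selector pair appears, with the same value, in the node labels."""
    return all(labels.get(key) == value for key, value in selector.items())


def candidate_nodes(selector: Dict[str, str], nodes: Dict[str, Dict[str, str]]) -> List[str]:
    """Names of the nodes that satisfy the selector, in insertion order."""
    return [name for name, labels in nodes.items() if matches_node_selector(selector, labels)]


nodes = {
    "node-a": {"disktype": "ssd", "topology.kubernetes.io/zone": "zone-1"},
    "node-b": {"disktype": "hdd", "topology.kubernetes.io/zone": "zone-1"},
    "node-c": {"disktype": "ssd", "topology.kubernetes.io/zone": "zone-2"},
}

# Pod that needs an SSD.
print(candidate_nodes({"disktype": "ssd"}, nodes))  # ['node-a', 'node-c']
# Pods co-located in the same availability zone.
print(candidate_nodes({"topology.kubernetes.io/zone": "zone-1"}, nodes))  # ['node-a', 'node-b']
# No selector: every node is a candidate.
print(candidate_nodes({}, nodes))  # ['node-a', 'node-b', 'node-c']
```

Node affinity generalizes this exact-match check with operators such as `In`, `NotIn` and `Exists`, and adds soft (preferred) rules alongside required ones.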